This guide shows you how to connect your dbt Core project to Coalesce Quality to track model runs, test results, and metadata changes.

Prerequisites:

- Admin access to your Coalesce Quality workspace

- Ability to modify your dbt orchestration tool (Airflow, GitHub Actions, etc.)

Using dbt Cloud? You can integrate directly through Settings → Integrations → Add Integration → dbt Cloud instead of following this guide.

Set up dbt Core integration

Create integration in Coalesce Quality

- Navigate to Settings → Integrations → Add Integration

- Select dbt Core from the integration options

Configure integration settings

Integration name: Enter a descriptive name (e.g., Production dbt Core)

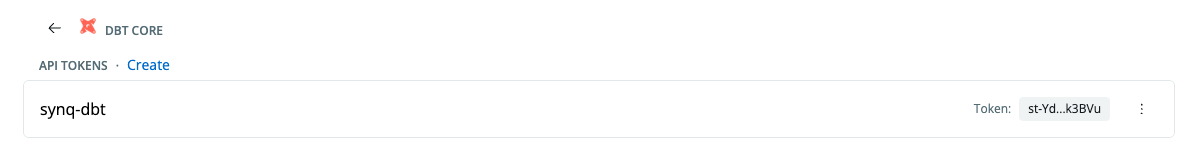

Generate token: Click Create to generate your integration token. You’ll use this token with the synq-dbt tool to send artifacts securely to Coalesce Quality.

Git integration: Select your Git provider to link model changes to repository commits. This enables change tracking and lineage visualization.

Relative path to dbt: If your dbt project isn’t in the repository root, specify the directory path (e.g., analytics/dbt/).

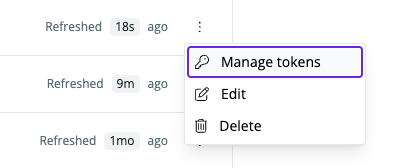

Manage integration tokens

Access token management through Settings → Integrations, then select your dbt Core integration and click Manage tokens.

- Create new tokens for different environments

- Invalidate compromised tokens

- Copy token snippets for easy integration

Install synq-dbt

About synq-dbt

synq-dbt is a command-line wrapper that runs your existing dbt Core commands and automatically uploads artifacts to Coalesce Quality. It is version-agnostic: it works with any dbt Core version because it passes all arguments directly to your installed dbt. It also integrates with orchestration tools such as Airflow, GitHub Actions, and Dagster.

Collected artifacts:

- manifest.json: Project structure and dependencies

- run_results.json: Execution status and performance metrics

- catalog.json: Complete data warehouse schema information

- sources.json: Source freshness test results
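You can check which of these files a run actually produced, and roughly how large the upload will be, by inspecting the target directory yourself. The sketch below is a minimal Python example. It assumes the default `target/` directory. `inventory_artifacts` is a hypothetical helper for local inspection and is not part of synq-dbt.

```python
from pathlib import Path
from typing import Dict, Optional

# Artifact files listed above; which ones exist depends on the dbt commands you ran.
ARTIFACTS = ("manifest.json", "run_results.json", "catalog.json", "sources.json")


def inventory_artifacts(target_dir: str = "target") -> Dict[str, Optional[int]]:
    """Map each artifact file name to its size in bytes, or None if it is absent.

    Hypothetical helper for illustration; synq-dbt performs its own collection.
    """
    base = Path(target_dir)
    sizes: Dict[str, Optional[int]] = {}
    for name in ARTIFACTS:
        path = base / name
        # Only regular files count; a missing file is reported as None.
        sizes[name] = path.stat().st_size if path.is_file() else None
    return sizes


if __name__ == "__main__":
    report = inventory_artifacts()
    total = sum(size for size in report.values() if size is not None)
    for name, size in report.items():
        print(f"{name}: {'missing' if size is None else f'{size:,} bytes'}")
    print(f"total: {total / 1_000_000:.1f} MB")
```

In dbt, catalog.json is written by `dbt docs generate` and sources.json by `dbt source freshness`. A plain `dbt run` therefore usually leaves those two entries reported as missing.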

When you run a command through it, synq-dbt:

- Executes your locally installed dbt Core with all provided arguments

- Captures the original dbt exit code

- Reads your SYNQ_TOKEN environment variable

- Uploads artifacts from the target directory to Coalesce Quality

- Returns the original dbt Core exit code, even if the upload fails, so your pipeline and CI/CD checks behave exactly as they would with plain dbt
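The control flow of these steps can be sketched in Python. This is an illustration of the behavior described above, not synq-dbt's actual implementation:

- `run_and_upload` is a hypothetical name.
- `upload` is a hypothetical callable standing in for the real upload step.
- Skipping the upload when SYNQ_TOKEN is unset is an assumption made only for this sketch.

```python
import os
import subprocess
import sys
from typing import Callable, Sequence


def run_and_upload(
    dbt_args: Sequence[str],
    upload: Callable[[str, str], None],
    dbt_executable: str = "dbt",
) -> int:
    """Run dbt, attempt an artifact upload, and always return dbt's own exit code.

    `upload(token, target_dir)` is a hypothetical stand-in for the real upload step.
    """
    # 1. Execute dbt with every argument passed through unchanged.
    completed = subprocess.run([dbt_executable, *dbt_args])
    # 2. Capture the original exit code.
    exit_code = completed.returncode
    # 3. Read the token and artifact directory from the environment.
    token = os.environ.get("SYNQ_TOKEN")
    target_dir = os.environ.get("SYNQ_TARGET_DIR", "target")
    # 4. Attempt the upload; failures are logged, never raised.
    if token:
        try:
            upload(token, target_dir)
        except Exception as exc:  # broad on purpose: an upload error must not fail the pipeline
            print(f"artifact upload failed: {exc}", file=sys.stderr)
    else:
        # Assumption for this sketch only: skip the upload when no token is set.
        print("SYNQ_TOKEN not set; skipping upload", file=sys.stderr)
    # 5. Return dbt's exit code regardless of the upload outcome.
    return exit_code
```

The key design point is step 5. The wrapper returns dbt's exit code rather than the upload's result, so a failed dbt run still fails the pipeline and a failed upload never does.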

Installation methods

Choose the installation method that matches your dbt orchestration setup:

Airflow with DockerOperator

- Set environment variable: In the Airflow UI, create a new environment variable SYNQ_TOKEN with your integration token.

- Update Dockerfile:

- Update your operator:

Linking dbt models to Airflow tasks: To automatically link your dbt models with the Airflow tasks that execute them, see the Airflow + dbt Core Linking guide. This enables bidirectional visibility between your orchestration and data layers.

Airflow with dbt Plugin

- Set environment variable: Create SYNQ_TOKEN in the Airflow UI.

- Install synq-dbt:

- Update DbtOperator:

For linking dbt models to Airflow tasks, see the Airflow + dbt Core Linking guide.

Dagster

- Configure environment: Add SYNQ_TOKEN=<your-token> to your .env file. For US region workspaces, also add SYNQ_API_ENDPOINT=https://api.us.synq.io.

- Update resources in definitions.py:

- Update assets in assets.py:

Docker

Add to your Dockerfile:

Linux/macOS

Download and install:

Use synq-dbt

Basic usage

Replace your existing dbt Core commands with synq-dbt:
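synq-dbt forwards all arguments to dbt, so if your orchestrator builds dbt commands in code, the change is mechanical: only the executable name changes. The following is a small Python sketch. `to_synq_dbt` is a hypothetical helper for illustration.

```python
import shlex
from typing import List


def to_synq_dbt(command: str) -> List[str]:
    """Rewrite a dbt Core command line so it runs through synq-dbt.

    Only the leading executable changes; every other argument is kept as-is.
    """
    parts = shlex.split(command)
    if not parts or parts[0] != "dbt":
        raise ValueError(f"expected a command starting with 'dbt': {command!r}")
    return ["synq-dbt", *parts[1:]]


print(shlex.join(to_synq_dbt("dbt build --select tag:daily --target prod")))
# synq-dbt build --select tag:daily --target prod
```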

Upload existing artifacts

If you have already generated dbt artifacts and want to upload them to Coalesce Quality:

Configuration options

Environment variables:

- SYNQ_TOKEN: Your integration token (required)

- SYNQ_TARGET_DIR: Artifact directory path (default: target/)

- SYNQ_API_ENDPOINT: API endpoint for your region (required for US region)

EU region customers (default) don’t need to set SYNQ_API_ENDPOINT; the default endpoint is https://developer.synq.io. US region customers must set it to https://api.us.synq.io.

Network requirements:

- Allow outbound HTTPS traffic to developer.synq.io:443 (EU region) or api.us.synq.io:443 (US region)

- Artifacts appear in Coalesce Quality within minutes of upload under normal conditions

- Failed uploads are logged and can be retried

- Typical payload sizes range from several megabytes to tens of megabytes depending on project size
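To confirm that the firewall allows these connections, you can try a plain TCP connection from the machine that runs synq-dbt. The following is a minimal Python sketch. `can_reach` and `SYNQ_HOSTS` are hypothetical names. The check only verifies that a connection opens; it does not perform a TLS handshake or authenticate.

```python
import socket

# Upload hosts from the network requirements above.
SYNQ_HOSTS = {"EU": "developer.synq.io", "US": "api.us.synq.io"}


def can_reach(host: str, port: int = 443, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port opens within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # DNS failures, refusals, and timeouts all surface as OSError subclasses.
        return False
```

For example, `can_reach(SYNQ_HOSTS["US"])` returning False on a US workspace points to a DNS, proxy, or firewall rule rather than a token problem.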

For advanced configuration options and troubleshooting, see the synq-dbt GitHub repository.